In the rush to integrate Artificial Intelligence into software development workflows, many engineering leaders fall into a common trap: they treat the Large Language Model (LLM) as the product itself. This misunderstanding leads to misaligned expectations, friction in developer onboarding, and ultimately, suboptimal tooling choices.

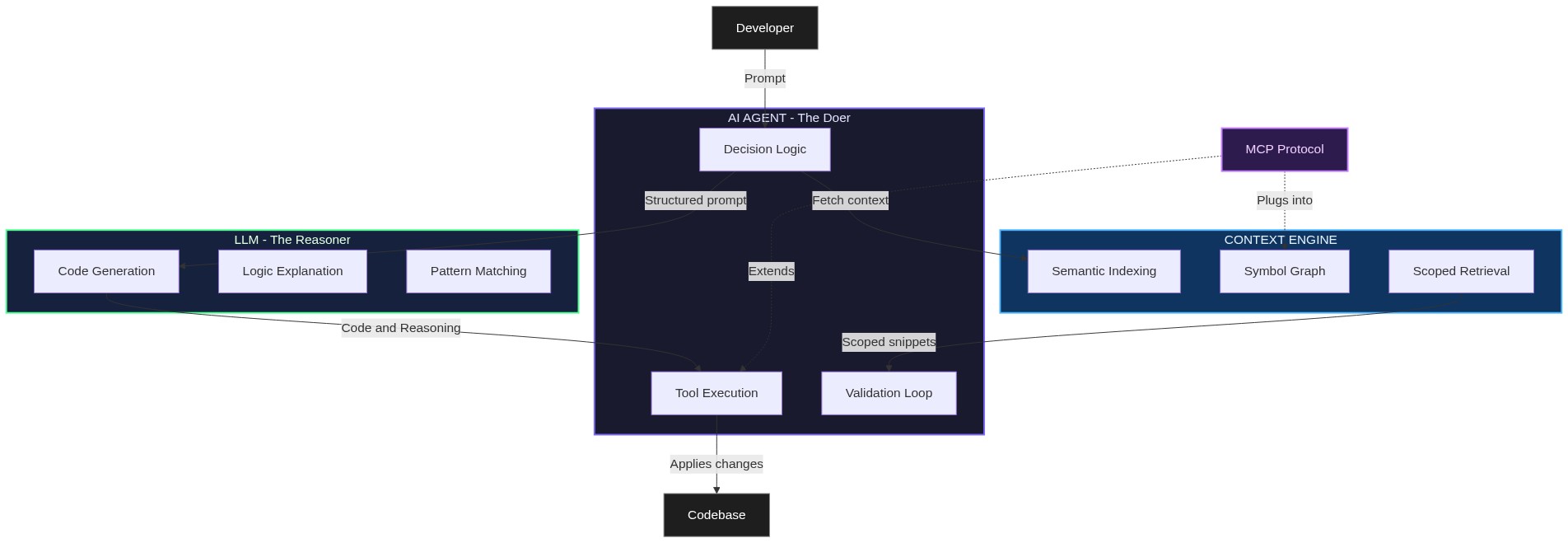

To build or evaluate a truly effective AI development environment, we must look past the model and understand the architectural synergy between three distinct components: the AI Agent, the Context Engine, and the LLM.

Pillar 1: The AI Agent (The “Doer”)

The AI Agent is the active, orchestrating layer of the system. Unlike a standard chatbot, an agent doesn’t just talk about code; it reads, edits, and runs it. It is the bridge between the developer’s intent and the actual files in the repository.

Core Responsibilities:

- Tool Execution: Reading and writing files, applying diffs, and managing Git state (branches, staged changes, commits).

- Validation Loop: Running tests, linters, and compilers to verify changes before they reach the human reviewer.

- Decision Logic: Determining the sequence of operations (e.g., “I need to read the interface definition before I can implement the class”).
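The three responsibilities above can be sketched as a small loop. This is a minimal illustration, not any particular product's implementation: `Workspace`, `validate`, `run_agent`, and the `propose` callable (a stand-in for the LLM call) are all hypothetical names, and "validation" here is only a syntax check where a real agent would run tests and linters.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class Workspace:
    """In-memory stand-in for the files the agent is allowed to edit."""
    files: Dict[str, str] = field(default_factory=dict)

    def read(self, path: str) -> str:
        return self.files[path]

    def write(self, path: str, content: str) -> None:
        self.files[path] = content


def validate(workspace: Workspace, path: str) -> Optional[str]:
    """Validation step: return an error message, or None if the file parses."""
    try:
        compile(workspace.read(path), path, "exec")
        return None
    except SyntaxError as exc:
        return f"{exc.msg} (line {exc.lineno})"


def run_agent(
    propose: Callable[[str, str, Optional[str]], str],
    workspace: Workspace,
    path: str,
    task: str,
    max_attempts: int = 3,
) -> bool:
    """Read, ask the model for a change, apply it, validate, retry on failure."""
    original = workspace.read(path)
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        current = workspace.read(path)                # tool execution: read
        candidate = propose(task, current, feedback)  # hypothetical LLM call
        workspace.write(path, candidate)              # tool execution: write
        feedback = validate(workspace, path)          # validation loop
        if feedback is None:
            return True                               # safe to show a reviewer
    workspace.write(path, original)                   # give up: restore the file
    return False


# Usage with a fake model that only gets it right after seeing the error.
def fake_llm(task: str, current: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "def add(a, b) return a + b"  # missing colon
    return "def add(a, b):\n    return a + b\n"


ws = Workspace({"math_utils.py": "# empty\n"})
print(run_agent(fake_llm, ws, "math_utils.py", "implement add"))
```

The point of the sketch is the feedback path: the validator's error message is fed back into the next proposal, so the model's mistakes are caught before a human sees them.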


Pillar 2: The Context Engine (The “Awareness”)

A common misconception is that LLMs “know” your codebase. In reality, an LLM is a stateless prediction engine: it retains nothing between requests and sees only what is placed in its prompt. The Context Engine provides the necessary grounding, transforming raw source files into a semantic map that the agent can navigate.

Traditional keyword search is insufficient for complex refactoring. A sophisticated Context Engine uses semantic embeddings and graph-based relationships to answer:

- “Where is this symbol actually instantiated?”

- “What are the downstream dependencies of this module?”

- “What architectural patterns should I follow in this specific directory?”

By providing high-fidelity, relevant snippets, the Context Engine reduces the risk of “hallucinations” and keeps the LLM’s output grounded in your project’s reality.
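To make the graph-based side concrete, here is a toy sketch that answers the "downstream dependencies" question for a handful of Python modules. It uses only the standard-library `ast` module; the function names and the sample `sources` are made up for illustration. It ignores relative imports and does nothing with embeddings, which a real Context Engine would handle.

```python
import ast
from collections import defaultdict
from typing import Dict, Set


def build_import_graph(sources: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each project module to the set of project modules that import it."""
    dependents: Dict[str, Set[str]] = defaultdict(set)
    for module, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Import):
                imported = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                imported = [node.module]
            else:
                continue
            for name in imported:
                if name in sources:  # skip stdlib and third-party imports
                    dependents[name].add(module)
    return dependents


def downstream(dependents: Dict[str, Set[str]], module: str) -> Set[str]:
    """All modules that directly or transitively depend on `module`."""
    seen: Set[str] = set()
    stack = [module]
    while stack:
        for dep in dependents.get(stack.pop(), ()):
            if dep not in seen:
                seen.add(dep)
                stack.append(dep)
    return seen


# Hypothetical three-module project: api -> services -> models.
sources = {
    "models": "class User: ...",
    "services": "from models import User",
    "api": "import services\nimport json",
}
graph = build_import_graph(sources)
print(sorted(downstream(graph, "models")))  # ['api', 'services']
```

A keyword search for "models" would find `services` but miss `api`, which never mentions the module by name. Following the graph transitively is what surfaces it.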

Pillar 3: The LLM (The “Reasoner”)

The LLM is the brain, but it’s a brain in a jar. Its role is strictly limited to high-level reasoning, language translation (natural language to code), and pattern recognition.

Key Strengths:

- Synthesizing complex logic into readable code.

- Explaining legacy debt or opaque functions.

- Proposing creative solutions based on its vast training data.

However, without the Agent to act or the Context Engine to see, the LLM is just a sophisticated autocomplete.

The Connective Tissue: Model Context Protocol (MCP)

While the three pillars define the architecture, the Model Context Protocol (MCP) is what enables them to scale. Often described as the “USB-C for AI,” MCP standardizes how AI Agents connect to diverse data sources and toolsets.

Before MCP, every AI tool had to build its own custom integration for every data source (Jira, GitHub, Slack, Odoo). MCP defines a common interface instead: each data source is exposed once through an MCP server, and any MCP-capable Agent can then pull context from an Odoo instance or a PostgreSQL database without agent-specific glue code.
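The idea can be sketched in a few lines. MCP messages are JSON-RPC 2.0, and tools are discovered and invoked with the `tools/list` and `tools/call` methods. Everything else below is an assumption made for illustration: `ToyServer` is an in-process stand-in rather than the official SDK, the tool names and data are invented, and the sketch omits the transport, the initialization handshake, and the input schema that real tool listings carry.

```python
from itertools import count
from typing import Any, Callable, Dict, Optional, Tuple

_ids = count(1)


def request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request in the shape MCP uses."""
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


class ToyServer:
    """In-process stand-in for an MCP server exposing a few tools."""

    def __init__(self, tools: Dict[str, Tuple[str, Callable[..., Any]]]):
        self.tools = tools  # name -> (description, function)

    def handle(self, msg: dict) -> dict:
        if msg["method"] == "tools/list":
            result = {"tools": [{"name": name, "description": desc}
                                for name, (desc, _) in self.tools.items()]}
        elif msg["method"] == "tools/call":
            _, fn = self.tools[msg["params"]["name"]]
            value = fn(**msg["params"].get("arguments", {}))
            result = {"content": [{"type": "text", "text": str(value)}]}
        else:
            return {"jsonrpc": "2.0", "id": msg["id"],
                    "error": {"code": -32601, "message": "Method not found"}}
        return {"jsonrpc": "2.0", "id": msg["id"], "result": result}


def call_tool(server: ToyServer, name: str, **arguments: Any) -> str:
    """Agent-side helper: identical no matter which server is behind it."""
    reply = server.handle(request("tools/call",
                                  {"name": name, "arguments": arguments}))
    return reply["result"]["content"][0]["text"]


# Two hypothetical servers with invented tools and data.
postgres = ToyServer({"count_rows": ("Count rows in a table",
                                     lambda table: {"users": 3}.get(table, 0))})
odoo = ToyServer({"open_invoices": ("List open invoice numbers",
                                    lambda: ["INV/001", "INV/002"])})

print(call_tool(postgres, "count_rows", table="users"))
print(call_tool(odoo, "open_invoices"))
```

Note that `call_tool` contains nothing specific to PostgreSQL or Odoo. The per-source work lives in the server, which is written once and reused by every agent that speaks the protocol.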

Summary: Agent-First, Not Model-First

The difference between a “neat toy” and a “force multiplier” lies in the orchestration.

- AI Agent: Executes actions and manages tools.

- Context Engine: Provides deep, semantic awareness.

- LLM: Provides reasoning and generation.